All,

I invest in individual stocks and use ETFs for risk reduction and diversification. I wouldn’t be me if I did not look at a machine learning approach for ETF selection for risk reduction.

As a start, and to avoid overfitting and cherry-picking, I used just SPY and TLT. I wanted to see if ML might improve on the old-school 60/40 split.

I started by using Portfolio Visualizer to find the risk-parity weights for SPY and TLT over a trailing 46-day window. The 46-day length was arrived at by optimization. I then walked this forward (or rather, Portfolio Visualizer did).
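For two assets, equal risk contribution reduces to inverse-volatility weighting, so the risk-parity step can be sketched in a few lines. This is only an illustration and not necessarily how Portfolio Visualizer computes it. The function name `inverse_vol_weights`, the use of simple periodic returns, and treating "46 days" as the last 46 return observations are all assumptions.

```python
from statistics import stdev


def inverse_vol_weights(spy_returns, tlt_returns, window=46):
    """Return (w_spy, w_tlt) from trailing volatility.

    Assumption: inputs are lists of periodic simple returns, oldest first,
    and the window counts observations rather than calendar days.
    """
    # Sample standard deviation over the trailing window for each ETF.
    spy_vol = stdev(spy_returns[-window:])
    tlt_vol = stdev(tlt_returns[-window:])

    # Weight each ETF by the inverse of its volatility, then normalize to 1.
    inv_spy, inv_tlt = 1.0 / spy_vol, 1.0 / tlt_vol
    total = inv_spy + inv_tlt
    return inv_spy / total, inv_tlt / total


# Toy data: SPY swings twice as much as TLT, so it gets half of TLT's weight.
spy = [0.02, -0.02] * 23
tlt = [0.01, -0.01] * 23
w_spy, w_tlt = inverse_vol_weights(spy, tlt)
```

Walking this forward would mean recomputing the weights at each rebalance date using only the returns available up to that date.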

I then used various machine learning methods to find the probability that TLT would outperform SPY. This description is simplified for the post, but it is pretty accurate.

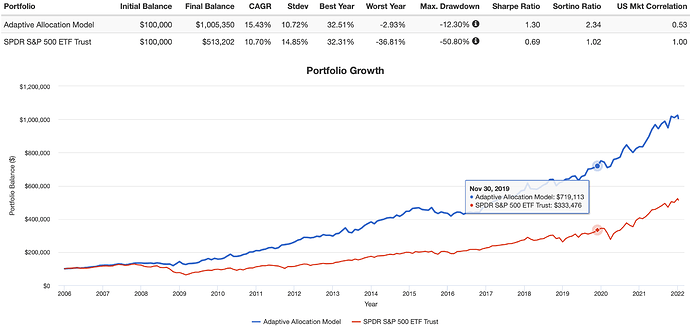

Using a Random Forest classifier gave good returns (better than SPY) and a good risk profile. But the Brier score and Brier skill score showed that it was not really a good predictor of the probability that TLT would outperform SPY. And the range of probability predictions was wide.
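For readers who have not used these metrics, here is a minimal sketch of the Brier score and Brier skill score. It is not the code behind the results in this post. The helper names are made up for illustration, and the reference forecast is assumed to be the base rate of the outcomes, which is one common choice.

```python
def brier_score(probs, outcomes):
    """Mean squared error between predicted probabilities and 0/1 outcomes."""
    return sum((p - o) ** 2 for p, o in zip(probs, outcomes)) / len(outcomes)


def brier_skill_score(probs, outcomes, reference_prob=None):
    """1 - BS / BS_ref; above 0 means the forecast beats the reference.

    Assumption: when no reference is given, use a constant forecast equal
    to the base rate of the outcomes. In a walk-forward test the base rate
    should come from the training period instead.
    """
    if reference_prob is None:
        reference_prob = sum(outcomes) / len(outcomes)
    bs_ref = brier_score([reference_prob] * len(outcomes), outcomes)
    # bs_ref is zero only if every outcome is identical; not handled here.
    return 1.0 - brier_score(probs, outcomes) / bs_ref


# Toy example: 1 means TLT outperformed SPY in that period.
outcomes = [1, 0, 1, 0]
probs = [0.6, 0.4, 0.6, 0.4]
bs = brier_score(probs, outcomes)
bss = brier_skill_score(probs, outcomes)
```

A lower Brier score is better. A skill score at or below zero says the model's probabilities are no more informative than always quoting the reference rate, even if a strategy built on them happens to make money.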

Logistic regression did better on the Brier skill score (better than the reference calculation). And the probabilities were in a narrow range. Maybe too narrow to be very useful, but it was realistic. As the literature would suggest, a Gaussian Naive Bayes classifier was not so good for probabilities.

Finally, I just multiplied the proportions of SPY and TLT recommended by risk parity by each ETF's probability of outperformance from the logistic regression, and then normalized the result.
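The multiply-and-normalize step can be sketched as follows. The function name `blend_weights` is hypothetical, and the sketch assumes SPY's probability of outperformance is simply one minus TLT's, which holds if ties are ignored.

```python
def blend_weights(rp_spy, rp_tlt, p_tlt_outperforms):
    """Tilt risk-parity weights by the probability each ETF outperforms.

    Assumption: p_spy = 1 - p_tlt (ties between the two ETFs are ignored).
    """
    p_spy_outperforms = 1.0 - p_tlt_outperforms

    # Multiply each risk-parity weight by that ETF's probability.
    raw_spy = rp_spy * p_spy_outperforms
    raw_tlt = rp_tlt * p_tlt_outperforms

    # Normalize so the two weights sum to 1.
    total = raw_spy + raw_tlt
    return raw_spy / total, raw_tlt / total


# A 50/50 probability leaves the risk-parity weights unchanged.
neutral = blend_weights(0.4, 0.6, 0.50)

# A mild 55% edge for TLT tilts the allocation only a few points.
tilted = blend_weights(0.4, 0.6, 0.55)
```

Because the probabilities enter as a ratio, predictions that stay close to 0.5 move the final allocation only slightly away from plain risk parity.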

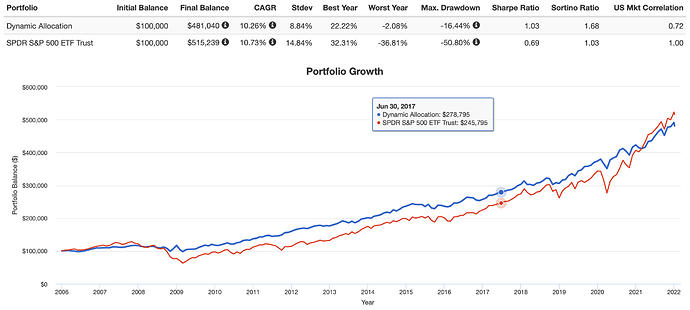

I uploaded this “dynamic allocation” back into Portfolio Visualizer. I got something that slightly underperformed SPY with less drawdown and a better Sharpe ratio (image).

I have reason to believe that by replacing SPY with a mixture of ETFs one can do better. SPY can be replaced, in part or completely, by individual stocks but also GLD or REIT ETFs to further reduce the volatility.

This can then be mixed with whatever riskier, higher-beta strategy one believes in as part of a strategy to control risk. Or someone might mix this with a more conservative fund (like AGG), again to approximate whatever risk profile one desires.

This is a first attempt that, probably, I will not end up using. I spent maybe 2 hours on this and I will make a guarantee that it is flawed at this point. Pros like Chaim, who recently posted on risk reduction, probably have better strategies at the end of the day. Other people with finance degrees (who are not necessarily in the business of constructing portfolios for customers) have also posted on this and probably have some more developed ideas.

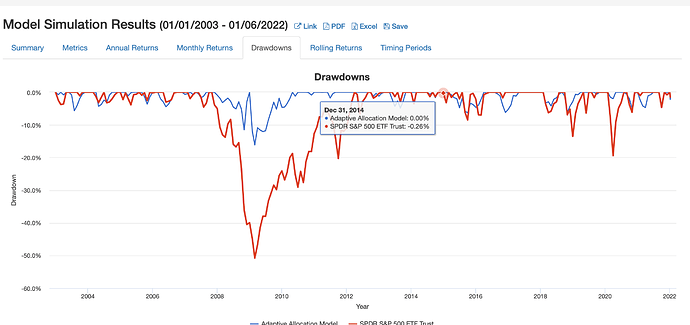

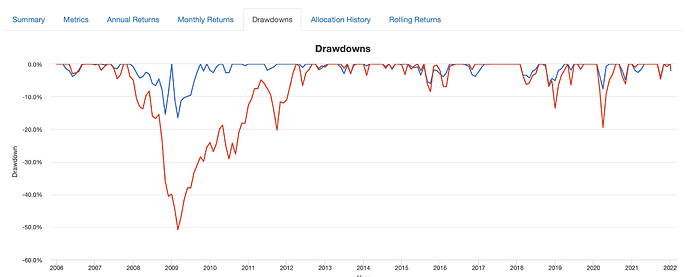

Edit: FWIW here are the drawdowns for just risk parity alone (first) and then for the AI approach discussed above. Not much difference. The AI had better annualized returns and a better Sharpe ratio (compared to risk parity alone) but it was not dramatic. This is consistent with the ranges in probability predicted by the logistic regression being narrow (above). I don’t know if this can be improved upon. As I said, this is a first look that I am not selling or even recommending to anyone.

Personally, I’m still not funding it. Some of my portfolio intended for risk management is managed by professionals and you might consider doing the same if you are investing in some high-beta strategies (as I do).

Jim