Andreas and Yuval -

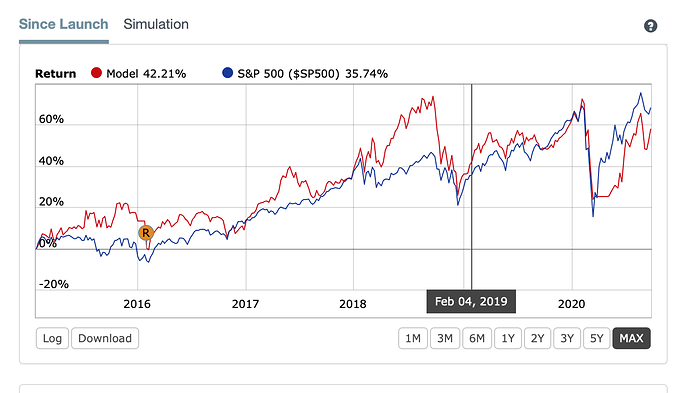

Many years ago, I wrestled with the same issues you are experiencing today, Andreas. A backtest that I created performed exceptionally well. However, when I reran it a few months later to review the OOS results, the performance was significantly worse, even though I had done nothing but hit ‘rerun.’ I was astonished that results could change so radically, and seemingly always for the worse, without a single change to the Sim.

At the time, I made a post similar to yours in which I sought out reasons for this confidence-shaking lack of consistency. Several members chimed in – confirming they had the same experience as me, and their confidence was equally shaken. However, now I believe that blaming Portfolio123 for the problem was misguided.

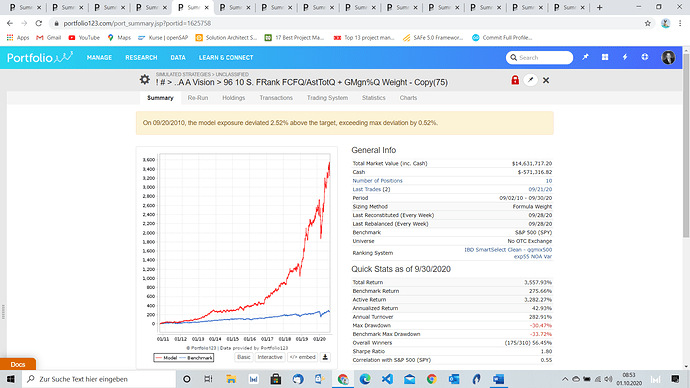

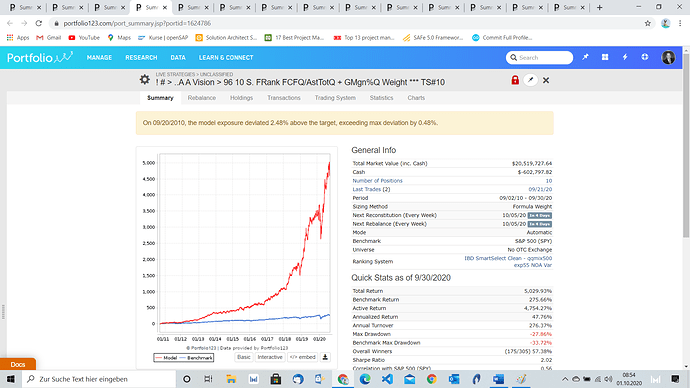

I discovered that substantial performance differences can occur in backtests when there is just a slight change near the beginning of the run. In a 20-year backtest, a (seemingly) inconsequential change of a few dollars in the entry price of an equity or ETF could result in an enormous swing in total performance and an Annual Return difference of 10% to 30%.

Einstein reputedly called compound interest “The greatest force in the universe,” with its phenomenal results depending on the amount of time under consideration. Many call compounding ‘magic’ because compound interest involves earning profits on the principal plus the profits added during the previous period (and the period before that, and the period before that, etc.). Unlike most things in life, with compounding, time is our best friend.

Of course, this principle also works in the opposite direction when we compound losses. A seemingly insignificant share-price difference of a dollar or three at the start of a 20-year investment of $100,000 can result in an enormous decline in results.

This is the ‘Butterfly Effect,’ well known in quant circles: sensitive dependence on initial conditions. A minute change in one state of a deterministic nonlinear system can result in large differences in a later state.

For this reason, I always use a wide range of starting dates as one of many tests for robustness. A three-day difference in the start date of a backtest, for example, can easily mean a difference of $3 or more, up or down, in the purchase price of a stock or ETF. Take 2,000 shares of SPY bought at $47 vs. 2,000 shares of SPY bought at $50: that minuscule $3 difference in share price compounds to become a difference of $1,140,298 over 20 years at an average Annualized Return of 30%; i.e., $19,004,964 becomes $17,864,666 at the terminal date because of that $3 difference in the initial state.
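For anyone who wants to check the arithmetic, here is a minimal Python sketch of the SPY example. It assumes a constant 30% return compounded once a year on the initial position value, with no further trades, costs, or dividends; the function name `terminal_value` is just something I made up for illustration and is not a P123 feature.

```python
def terminal_value(shares: int, entry_price: float,
                   annual_return: float, years: int) -> float:
    """Grow the initial position value at a constant annual rate.

    Assumption: one compounding step per year, nothing added or withdrawn.
    """
    initial_value = shares * entry_price
    return initial_value * (1.0 + annual_return) ** years


# Same share count, entry prices $3 apart, 20 years at 30% per year.
high = terminal_value(2000, 50.0, 0.30, 20)
low = terminal_value(2000, 47.0, 0.30, 20)
gap = high - low

print(f"Entry at $50: ${high:,.0f}")
print(f"Entry at $47: ${low:,.0f}")
print(f"Difference:   ${gap:,.0f}")
```

Under these assumptions the gap comes out to roughly $1.14 million. In percentage terms it stays at the same 6% that separates $47 from $50; it is the dollar amount that compounding magnifies.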

Moreover, a difference in ‘Point-in-Time’ (PIT) data with FactSet could result in a price difference in the initial state that will compound over the years to become a substantial difference at the terminal date. Still, we don’t have enough information to know how to handle this possibility. I agree with Andreas that we need to have the PIT issue with FactSet explained in detail.

I agree 100% with Andreas and hope that P123 will provide us with the details of this PIT discrepancy, assuming there is one. At this point, it isn’t a deal killer for me because my OOS results continue to match in-sample testing. However, users need to know the details of this potential difference so they can adjust for any discrepancy between the prior PIT data and the FactSet PIT data.