Let’s say you had to choose between two strategies. Both are twenty-stock models that are very easy to trade (no microcaps).

Strategy one has beaten the market every year since 1999, has a CAGR of 43% (a 44,470% total return), and has never had a negative return in a calendar year. EXCEPT for 2017. Since January 1, it has lost 6.5%, underperforming the S&P 1500 by 24 percentage points.

Strategy two has beaten the market every year since 1999 except 2009, has a CAGR of 30% (an 8,991% total return), and has never had a negative return in a calendar year. Since January 1, it has gained 19%, outperforming the S&P 1500 by 1 percentage point.

Which would you choose?

I would choose strategy #1. After all, it made FIVE TIMES as much money as strategy #2 over the last 18 years. F**k the recent performance; it's a fluke.
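The "five times" figure follows directly from the quoted total returns, and the CAGRs can be cross-checked against them. Below is a minimal Python sketch of that arithmetic; the helper names are my own, and the only inputs are the numbers quoted above.

```python
import math


def growth_multiple(total_return_pct: float) -> float:
    """Turn a cumulative percentage return into an ending-wealth multiple (44,470% -> 445.7x)."""
    return 1 + total_return_pct / 100


def cagr_pct(total_return_pct: float, years: float) -> float:
    """Compound annual growth rate, in percent, implied by a total return over `years` years."""
    return (growth_multiple(total_return_pct) ** (1 / years) - 1) * 100


def implied_years(total_return_pct: float, cagr: float) -> float:
    """Years of compounding at `cagr` percent needed to produce the given total return."""
    return math.log(growth_multiple(total_return_pct)) / math.log(1 + cagr / 100)


# Figures quoted in the post.
ONE_TOTAL, ONE_CAGR = 44_470, 43
TWO_TOTAL, TWO_CAGR = 8_991, 30

wealth_ratio = growth_multiple(ONE_TOTAL) / growth_multiple(TWO_TOTAL)
print(f"Ending wealth, strategy one vs. two: {wealth_ratio:.1f}x")
print(f"Implied horizon, strategy one: {implied_years(ONE_TOTAL, ONE_CAGR):.1f} years")
print(f"Implied horizon, strategy two: {implied_years(TWO_TOTAL, TWO_CAGR):.1f} years")
```

The ending-wealth ratio comes out at about 4.9, and both CAGR/total-return pairs imply roughly 17 years of compounding, so the two sets of figures are consistent with each other.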

But I have a feeling that most people out there would look at the 2017 performance and say “no way!”

Now what if I told you the strategies were EXACTLY THE SAME, and the only difference was the universe they were applied to? Strategy one was applied to the S&P 1500; strategy two was applied to the All Fundamentals universe, limited to companies with a market capitalization between $1 billion and $5 billion. Everything else was the same: all the buy rules, sell rules, ranking rules, and universe exclusion rules.

Now which would you choose? Would 2017’s performance matter in this case?

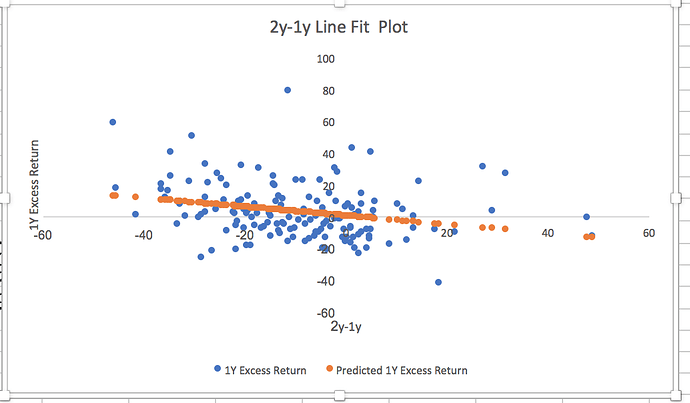

The way people go in and out of ports depending on recent performance, and the way they judge a strategy by its last six months of out-of-sample (OOS) results, all strikes me as incredibly short-sighted. If you're not looking at a strategy's five-year or ten-year performance, you're looking at NOTHING. If you looked at the universe of all P123 strategies, you would probably find almost NO correlation between a strategy's return in one six-month period and its return in the next.
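The correlation claim above is a guess rather than a measured result, but it is easy to test if you have the data. Here is a minimal Python sketch, assuming you can export each strategy's consecutive six-month returns into a dictionary keyed by a (hypothetical) strategy name. For each pair of adjacent periods it correlates the strategies' returns across the cross-section, then averages those correlations.

```python
from math import sqrt
from typing import Mapping, Sequence


def pearson(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Pearson correlation of two equal-length samples."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two equal-length samples with at least 2 points")
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    # Raises ZeroDivisionError if every strategy had the same return in a period.
    return cov / sqrt(var_x * var_y)


def half_year_persistence(returns: Mapping[str, Sequence[float]]) -> float:
    """Average cross-sectional correlation between strategies' returns in one
    six-month period and their returns in the following six-month period.

    `returns` maps a hypothetical strategy name to its consecutive six-month
    returns; every series must cover the same periods in the same order.
    """
    series = list(returns.values())
    if len(series) < 2:
        raise ValueError("need at least two strategies")
    n_periods = len(series[0])
    if n_periods < 2 or any(len(s) != n_periods for s in series):
        raise ValueError("every strategy needs the same two or more periods")
    correlations = []
    for t in range(n_periods - 1):
        this_half = [s[t] for s in series]      # every strategy's return in period t
        next_half = [s[t + 1] for s in series]  # the same strategies in period t + 1
        correlations.append(pearson(this_half, next_half))
    return sum(correlations) / len(correlations)
```

An average near zero across many strategies and periods would support the point that last half-year's winners tell you little about next half-year's; a clearly positive value would suggest that recent performance does persist.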

But judging designer models almost purely on OOS performance contradicts everything about the way strategies are designed to work. We design them with long look-back periods because we want them to perform well over long forward periods, not just over the next six months. Nobody (I hope) designs models by looking only at performance over the past six months. So why should OOS performance, which is necessarily short, outweigh backtested performance so heavily? Shouldn't there be more of a balance between them?
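One way to see why a short OOS record carries so little information: under the simplifying assumption that returns are independent and identically distributed, the standard error of an annualized mean return shrinks with the square root of the number of years observed, roughly σ/√T. The sketch below uses a hypothetical 25% annual volatility; it captures only sampling noise, not the separate risk that a backtest was overfit.

```python
from math import sqrt


def annualized_mean_std_error(annual_vol: float, years: float) -> float:
    """Standard error of the annualized mean return after `years` of data,
    assuming i.i.d. returns with annualized volatility `annual_vol`."""
    if years <= 0:
        raise ValueError("years must be positive")
    return annual_vol / sqrt(years)


ASSUMED_VOL = 0.25  # hypothetical annual volatility for a twenty-stock model

for years in (0.5, 5, 10, 18):
    err = annualized_mean_std_error(ASSUMED_VOL, years)
    print(f"{years:>4} years of data: +/- {err:.1%} per year (one standard error)")
```

With these assumptions, six months of data leaves an uncertainty of about 35 percentage points per year in the average return, versus about 8 points after ten years.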